Y4K?

What should come after HDTV? There’s certainly a lot of buzz about 3D TV. Such directors as James Cameron and Douglas Trumbull are pushing for higher frame rates. Several manufacturers have introduced TVs with a 21:9 (“CinemaScope”) aspect ratio instead of HDTV’s 16:9. Some think we should increase dynamic range (the range from dark to light). Some think it should be a greater range of colors. Japan’s Super Hi-Vision offers 22.2-channel surround sound. And then there’s 4K.

In simple terms, 4K has approximately twice as much detail as HDTV in both the horizontal and vertical directions. If the orange rectangle above is HDTV, the blue one is roughly 4K. It’s called 4K because there are 4096 picture elements (pixels) per line.

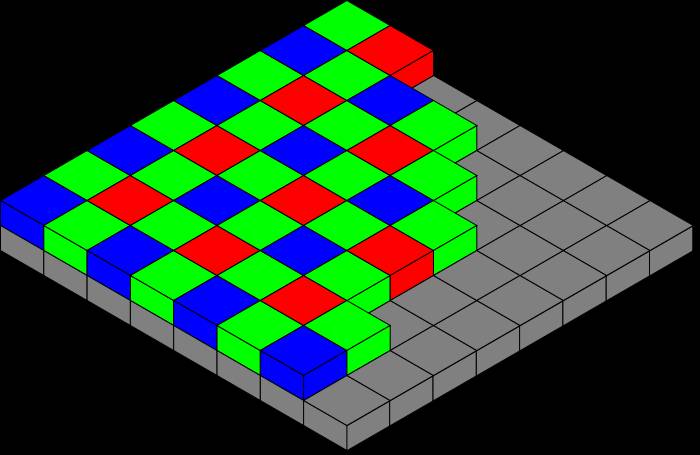

This post will not get much more involved with what 4K is. The definition of 4096 pixels per line says nothing about capture or display. Even at lower resolutions, some cameras use a complete image sensor for each primary color; others use some sort of color filtering on a single image sensor. At left is Colin Burnett’s depiction of the popular Bayer filter design. Clearly, if such a filtered image sensor were shooting another Bayer filter offset by one color element, the result would be nothing like the original.

Optical filtering and “demosaicking” algorithms can reduce color problems, but the filtering also reduces resolution. Some say a single color-filtered image sensor with 4096 pixels per line is 4K; others say it isn’t. That’s an argument for a different post. This one is about why 4K might be considered useful.

An obvious answer is for more detail resolution. But maybe that’s not quite as obvious as it seems at first glance. The history of video technology certainly shows ever-increasing resolutions, from eight scanning lines per frame in the 1920s to HDTV’s….

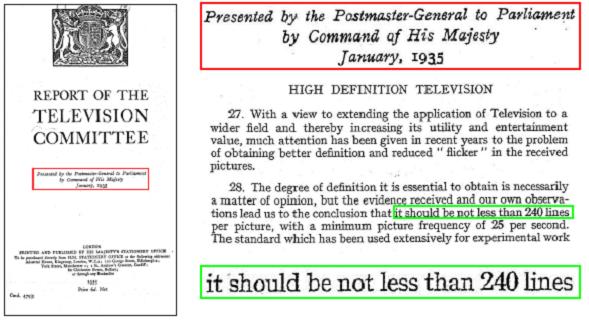

As can be seen above, in 1935, a British Parliamentary Report declared that HDTV should have no fewer than 240 lines per frame. Today’s HDTV has 720 or 1080 “active” (picture-carrying) lines per frame, and 4K has a nominal 2160, but even ordinary 525-line (~480 active) TV was considered HDTV when it was first introduced.

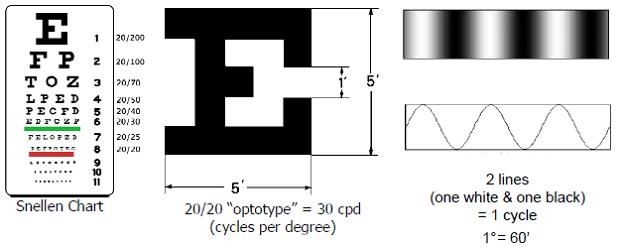

Human visual acuity is often measured with a common Snellen eye chart, as shown at left above. On the line for “normal” vision (20/20 in the U.S., 6/6 in other parts of the world), each portion of the “optotype” character occupies one arcminute (1′, a sixtieth of a degree) of retinal angle, so there are 30 “cycles” of black and white lines per degree.

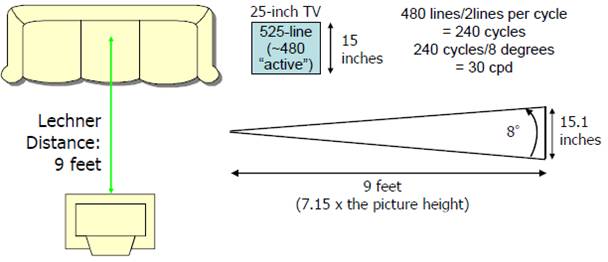

Bernard Lechner, a researcher at RCA Laboratories at the time, studied television viewing distances in the U.S. and determined they were about nine feet (Richard Jackson, a researcher at Philips Laboratories in the UK at the same time, came up with a similar three meters). As shown above, a 25-inch 4:3 TV screen provides just about a perfect match to “normal” vision’s 30 cycles per degree when “525-line” television is viewed at the Lechner Distance — roughly seven times the picture height.
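The match between the 25-inch set and 30 cycles per degree can be checked with a little trigonometry. The sketch below is only an illustration: the function name is made up for this post, and it assumes 480 active lines, two lines per cycle, and a flat screen viewed head-on from nine feet.

```python
import math

def cycles_per_degree(active_lines, picture_height, viewing_distance):
    """Vertical cycles per degree of visual angle at the viewer's eye.

    Two scanning lines (one dark, one light) make one cycle.
    picture_height and viewing_distance must share the same unit.
    """
    cycles = active_lines / 2
    # Angle subtended by the full picture height, in degrees.
    angle = 2 * math.degrees(math.atan((picture_height / 2) / viewing_distance))
    return cycles / angle

# A 25-inch-diagonal 4:3 screen is 15 inches high (a 3-4-5 triangle).
height_in = 25 * 3 / 5
distance_in = 9 * 12  # nine feet, in inches

cpd = cycles_per_degree(480, height_in, distance_in)
print(f"{distance_in / height_in:.1f} picture heights, {cpd:.1f} cycles/degree")
```

The result lands close to 30 cycles per degree at a little over seven picture heights, which is the match described above.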

HDTV should, under the same theory, be viewed from a smaller multiple of the screen height (h). For 1080 active lines, it should be 7.15 x 480/1080, or about 3.2h. Looked at another way, at a nine-foot viewing distance, the height should be about 34 inches, which on a 16:9 screen means a width of about 60 inches and a diagonal of about 69 inches. HDTV screens of 60 inches and larger are not uncommon, and neither are closer viewing distances.

For 4K (again, using the same theory), it should be a screen height of about 68 inches. Add a few inches for a screen bezel and stand, and mount it on a table, and suddenly the viewer needs a minimum ceiling height of nine feet!
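The scaling behind those screen heights is simple enough to put in a few lines. This is a rough sketch of the same theory, not anyone's official formula; the function names are invented here, and the 7.15h reference for 480 active lines is taken from the arithmetic above.

```python
def viewing_multiple(active_lines, ref_lines=480, ref_multiple=7.15):
    """Viewing distance in picture heights, scaled from the 480-line case."""
    return ref_multiple * ref_lines / active_lines

def matched_height(distance, active_lines):
    """Screen height that matches 'normal' acuity at the given distance."""
    return distance / viewing_multiple(active_lines)

distance_in = 9 * 12  # the nine-foot viewing distance, in inches

for name, lines in [("525-line", 480), ("HD", 1080), ("4K", 2160)]:
    m = viewing_multiple(lines)
    h = matched_height(distance_in, lines)
    print(f"{name:>8}: {m:.2f}h viewing distance, {h:.0f}-inch screen height")
```

Doubling the line count halves the viewing multiple, so at a fixed distance the matched screen height doubles.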

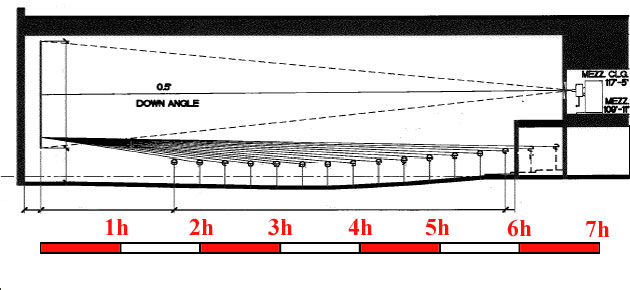

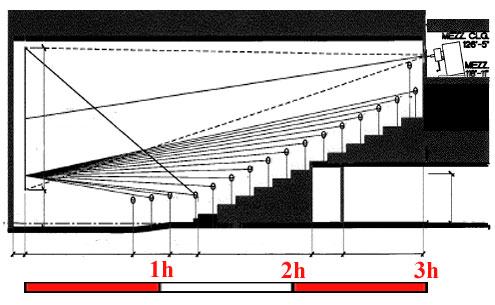

Of course, cinema auditoriums don’t have domestic ceiling heights. Above is an elevation of a typical old-style auditorium, courtesy of Warner Bros. Technical Operations. The scale is in picture heights. Back near the projection booth, standard-definition resolution seems adequate. Even in the fifth row, HD resolution seems adequate. Below, however, is a modern, stadium-seating cinema auditorium (courtesy of the same source).

This time, even a viewer with “normal” vision in the last row could see greater-than-HD detail, and 4K could well serve most of the auditorium. That’s one reason why there’s interest in 4K for cinema distribution.

Another reason is doubt about that theory of “normal” vision. First of all, there are lines on the Snellen eye chart (which dates back to 1862) below the “normal” line, meaning some viewers can see more resolution.

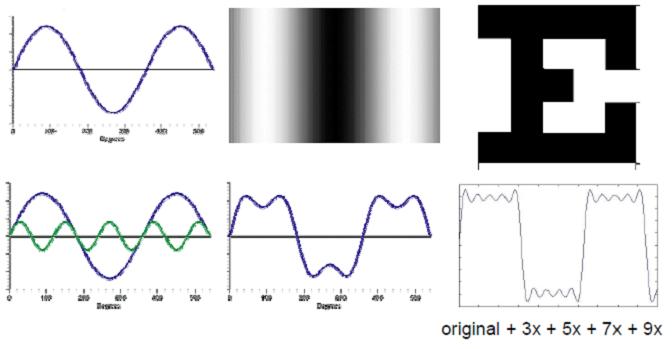

Then there are the sharp lines of the optotypes. A wave cycle would have gently shaded transitions between white and black, which might make the optotype more difficult to identify on an eye chart. Adding in higher frequencies, as shown below, makes the edges sharper, and 4K offers higher frequencies than does HD.
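The effect of adding higher frequencies can be tried numerically. The sketch below is an idealized one-dimensional illustration, assuming a black-and-white bar pattern behaves like a textbook square wave built from odd harmonics; the function names are made up for this post.

```python
import math

def square_wave_partial_sum(x, n_harmonics):
    """Sum the first n odd harmonics of a unit square wave at phase x (radians)."""
    total = 0.0
    for k in range(n_harmonics):
        n = 2 * k + 1  # odd harmonics only: 1, 3, 5, ...
        total += math.sin(n * x) / n
    return 4 / math.pi * total

def edge_slope(n_harmonics, h=1e-4):
    """Numerical slope at the black-to-white transition (x = 0)."""
    rise = square_wave_partial_sum(h, n_harmonics) - square_wave_partial_sum(-h, n_harmonics)
    return rise / (2 * h)

for n in (1, 2, 4, 8):
    print(f"{n} harmonic(s): edge slope = {edge_slope(n):.2f}")
```

Each added harmonic steepens the transition by the same amount, so a system that passes twice the frequency range renders a noticeably crisper edge on the same pattern.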

Then there’s sharpness, which is different from resolution. Words that end in -ness (brightness, loudness, sharpness, etc.) tend to be human psychophysical sensations (psychological responses to physical stimuli) rather than simple machine-measurable characteristics (luminance, sound level, resolution, contrast, etc.). Another RCA Labs researcher, Otto Schade, showed that sharpness is proportional to the area under the square of a modulation-transfer function (MTF) curve, a curve plotting contrast ratio against resolution.

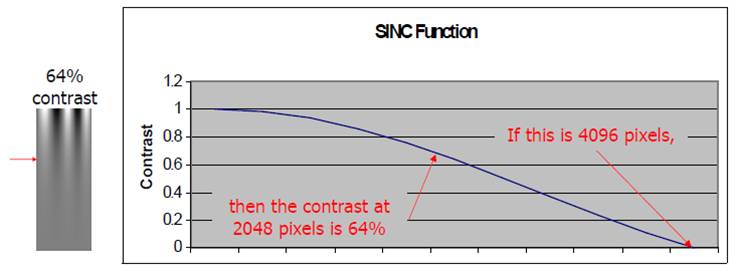

One of the factors affecting an MTF curve is the filtering inherent in sampling, as is done in imaging. An ideal filter might use a sine of x divided by x function, also called a SINC function. Above is a SINC function for an arbitrary image sensor and its filters. It might be called a 2K sensor, but the contrast ratio at 2K is zero, as shown by the red arrow at the left.

Above is the same SINC function. All that has changed is a doubling of the number of pixels (in each direction). Now the contrast ratio at 2K is 64%, a dramatic increase (again, as shown by the red arrow at the left). Of course, if the original sensor offered 64% at 2K, the improvement offered by 4K would be much less dramatic, a reason why the question of what 4K is is not trivial.
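The 0% and 64% figures fall straight out of the SINC function. Here is a minimal sketch, assuming the only filtering is an ideal sin(x)/x response whose first null sits at the sensor's nominal pixel count; the frequency units and the function name are arbitrary choices for illustration, and real sensors add lens and optical-filter losses on top.

```python
import math

def sinc_mtf(freq, first_null):
    """|sin(pi x) / (pi x)| contrast response, with x = freq / first_null."""
    x = freq / first_null
    if x == 0:
        return 1.0
    return abs(math.sin(math.pi * x) / (math.pi * x))

f_2k = 2048  # the "2K" detail frequency, in arbitrary units

contrast_2k_sensor = sinc_mtf(f_2k, first_null=2048)  # null lands exactly on 2K
contrast_4k_sensor = sinc_mtf(f_2k, first_null=4096)  # 2K is halfway to the null
print(f"2K sensor: {contrast_2k_sensor:.0%}, 4K sensor: {contrast_4k_sensor:.0%}")
```

Halfway to the first null, the response is 2/π, which rounds to the 64% quoted above.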

Then there’s 3D. Some of the issues associated with 3D shooting relate to the use of two cameras with different image sensors and processing. One camera might deliver different gray scale, color, or even geometry from the other.

Above is an alternative, two HD images (one for each eye’s view) on a single 4K image sensor. A Zepar stereoscopic lens system on a Vision Research Phantom 65 camera serves that purpose. It’s even available for rent.

There are other reasons one might want to shoot HD-sized images on a 4K sensor. One is image stabilization. The solid orange rectangle above represents an HD image that has been jiggled out of its appropriate position, the lighter orange rectangle behind it with the dotted border. There are many image-stabilization systems available that can straighten out a subject in the center, but they do so by trimming away what doesn’t fit, resulting in the smaller, green rectangle. If a 4K sensor is used, however, the complete image can be stabilized.
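The headroom a 4K sensor leaves around an HD frame is easy to quantify. The sketch below is a hypothetical illustration, not how any particular stabilizer works: it assumes a 4096 x 2160 sensor, a 1920 x 1080 output window that starts centered, and a shake already measured in whole pixels. The function names and sign convention are made up.

```python
def stabilization_margin(sensor_w, sensor_h, out_w, out_h):
    """Max shift (pixels) of a centered output window before it leaves the sensor."""
    if out_w > sensor_w or out_h > sensor_h:
        raise ValueError("output window is larger than the sensor")
    return (sensor_w - out_w) // 2, (sensor_h - out_h) // 2

def stabilized_window(sensor_w, sensor_h, out_w, out_h, shake_x, shake_y):
    """Top-left corner of the output window after compensating a measured shake.

    Returns None if the compensation would push the window off the sensor,
    the case in which a same-size sensor is forced to crop.
    """
    max_x, max_y = stabilization_margin(sensor_w, sensor_h, out_w, out_h)
    if abs(shake_x) > max_x or abs(shake_y) > max_y:
        return None
    return max_x - shake_x, max_y - shake_y

# HD window on a 4K sensor: plenty of room to move.
print(stabilization_margin(4096, 2160, 1920, 1080))
print(stabilized_window(4096, 2160, 1920, 1080, shake_x=100, shake_y=-50))
# HD window on an HD sensor: any shake at all runs out of picture.
print(stabilized_window(1920, 1080, 1920, 1080, shake_x=10, shake_y=0))
```

With the larger sensor, the window can slide more than a thousand pixels sideways and still be filled with real picture, which is why nothing has to be trimmed.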

It’s not just stabilization. An HD-sized image shot on a 4K sensor can be reframed in post production. The image can be moved left or right, up or down, rotated, or even zoomed out.

So 4K offers much even to people not intending to display 4K. But it comes at a cost. Cameras and displays for 4K are more expensive, and an uncompressed 4K signal has more than four times as much data as HD. If the 1080p60 (1080 active lines, progressively scanned, at roughly 60 frames per second) version of HD uses 3G (three-gigabit-per-second) connections, 4K might require four of those.
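The data-rate comparison can be worked through as back-of-the-envelope arithmetic. The figures below rest on assumptions not stated in this post: 4096 x 2160 versus 1920 x 1080 active pixels, 60 frames per second, 20 bits per pixel (10-bit 4:2:2 sampling), blanking ignored, and a nominal 2.97 Gbps for a 3G link.

```python
import math

def active_bits_per_second(width, height, fps, bits_per_pixel=20):
    """Uncompressed rate for the active picture only (blanking ignored)."""
    return width * height * fps * bits_per_pixel

LINK_3G_BPS = 2.97e9  # nominal 3G link rate, assumed for this estimate

hd = active_bits_per_second(1920, 1080, 60)
four_k = active_bits_per_second(4096, 2160, 60)
ratio = four_k / hd
links = math.ceil(four_k / LINK_3G_BPS)

print(f"HD: {hd / 1e9:.2f} Gbps, 4K: {four_k / 1e9:.2f} Gbps")
print(f"4K/HD ratio: {ratio:.2f}, 3G links needed: {links}")
```

Under those assumptions the ratio is a bit over four, and four 3G links are enough to carry the 4K picture.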

When getting 4K to cinemas or homes, however, compression is likely to be used, and, as can be seen from the MTF curves, the highest-resolution portion of the image has the lowest contrast ratio. It has been suggested that, in real-world images, it might take as little as an extra 5% of data rate to encode the extra detail of 4K over HD.

So, is 4K the future? The aforementioned Super Hi-Vision is already effectively 8K, and it’s scheduled to be used in next year’s Olympic Games.