Live From CES 2018: New Intel-Ferrari Deal Leverages AI, Drones for Motorsports Coverage

Sports plays a major role at the tech company’s booth, in its plans for the future

Anyone who saw Intel CEO Brian Krzanich’s Opening Keynote on Tuesday or has visited the company’s jam-packed booth (#100048) at CES 2018 can see that live sports coverage is at the core of the company’s plans for the future. Whether it’s highlighting Intel technology’s extensive role in producing the 2018 PyeongChang Olympics or bringing in NFL analyst and former Dallas Cowboys QB Tony Romo to promote its True View immersive 3D technology, sports is certainly the headline for Intel at CES.

In Intel’s demo at CES 2018, the viewer can select a specific driver and create a personalized race feed built around that driver from live footage captured by drones.

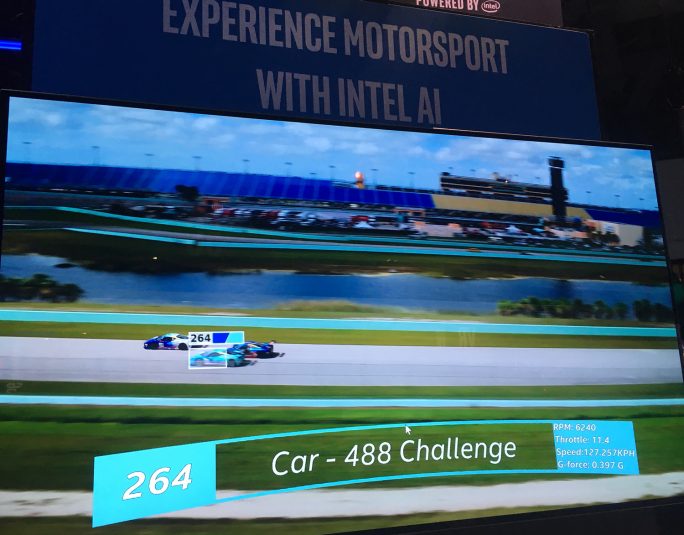

However, Intel’s most intriguing sports storyline at CES may be its newly announced three-year partnership with Ferrari North America to bring the power of artificial intelligence to the Ferrari Challenge North America Series. Beginning this year, race broadcasts will use AI technologies, including the Intel Xeon Scalable platform and neon Framework, to enhance the motorsports experience for spectators and transform the way fans view live motorsports.

According to Intel, CES is the first time the company is showcasing these techniques and the first time that the power of artificial intelligence has been applied to enhance racing coverage. A demo at Intel’s booth illustrates the ability of AI to recognize actions or automatically detect and identify objects by showing the identification of a car passing another car on the racetrack.
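As a rough illustration of what identifying "a car passing another car" can mean in code, here is a minimal Python sketch. It assumes an upstream object-detection step has already turned each video frame into a distance along the track for every car; the function name, the car IDs, and the data layout are hypothetical and are not taken from Intel's system.

```python
from typing import Dict, List, Tuple


def detect_overtakes(frames: List[Dict[str, float]]) -> List[Tuple[int, str, str]]:
    """Return (frame_index, passer, passed) each time two cars swap order.

    Each frame maps a car ID to its distance along the track in meters.
    These positions are assumed to come from an object-detection model;
    lapped traffic and wrap-around at the start line are ignored here.
    """
    events = []
    for i in range(1, len(frames)):
        prev, curr = frames[i - 1], frames[i]
        # Only compare cars that are visible in both frames.
        cars = sorted(set(prev) & set(curr))
        for a in cars:
            for b in cars:
                if a == b:
                    continue
                # Car a was behind car b and is now ahead of it.
                if prev[a] < prev[b] and curr[a] > curr[b]:
                    events.append((i, a, b))
    return events


# Hypothetical positions for two cars over three frames.
frames = [
    {"car_7": 100.0, "car_12": 105.0},
    {"car_7": 130.0, "car_12": 133.0},
    {"car_7": 162.0, "car_12": 160.0},
]
overtakes = detect_overtakes(frames)
print(overtakes)  # [(2, 'car_7', 'car_12')]
```

A production system would work from noisy detections and would need smoothing and lap counting; the sketch only shows the order-flip idea.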

AI, Drones Combine To Create Customized Feed, Deeper Insights

Intel is using its AI technology to derive deeper insights from data collected during races and use that data to augment the broadcast experience for fans. Combined with aerial footage captured via drone-mounted cameras, AI will allow broadcasters to analyze races in real time and help deepen the experience for fans watching a Ferrari Challenge North America broadcast.

Intel AI products, including Intel Xeon Scalable technology, will run inference on video captured by drones to apply object-identification models and tag video captures. Algorithms will then generate storylines about drivers to help make the race more compelling. Machine-learning technology will also be used to provide driving insights, which drivers can use to make adjustments that improve their overall race results. Over time, these models will be used to ingest telemetric data — steering angle, throttle pressure, braking pressure — to understand a racer’s driving style and predict track performance.
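To make the telemetry idea concrete, the following Python sketch reduces a stream of samples containing the three signals named above (steering angle, throttle, braking pressure) to a few summary numbers that could feed a driving-style model. The field names, units, and chosen statistics are assumptions for illustration only; the article does not describe the features Intel's models actually use.

```python
from dataclasses import dataclass
from statistics import mean
from typing import Dict, List


@dataclass
class TelemetrySample:
    # Field names and units are hypothetical.
    steering_angle_deg: float
    throttle_pct: float
    brake_pressure_bar: float


def summarize_style(samples: List[TelemetrySample]) -> Dict[str, float]:
    """Condense raw telemetry into simple driving-style features."""
    if not samples:
        raise ValueError("at least one telemetry sample is required")
    braking = sum(1 for s in samples if s.brake_pressure_bar > 0)
    return {
        "mean_throttle_pct": mean(s.throttle_pct for s in samples),
        "braking_fraction": braking / len(samples),
        "peak_steering_deg": max(abs(s.steering_angle_deg) for s in samples),
    }


# Four made-up samples from one short stretch of track.
samples = [
    TelemetrySample(0.0, 100.0, 0.0),
    TelemetrySample(-15.0, 50.0, 0.0),
    TelemetrySample(30.0, 0.0, 20.0),
    TelemetrySample(5.0, 50.0, 0.0),
]
style = summarize_style(samples)
print(style)
```

Features like these could be computed per lap or per corner and then compared across drivers, which is one plausible starting point for the style and performance predictions described above.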

In the demo footage at Intel’s booth, multiple drone-camera feeds are captured and cut together with Intel’s AI technology, and key moments during the race are identified. In addition, the viewer can select a specific driver and create a feed personalized around that driver. AI technology will also alert the viewer about key events in the race not involving a favorite driver, and the viewer can play back these highlights at their convenience.

Benefits for Viewer, Driver, Analyst

Until now, telemetric data has been analyzed after the race. Real-time analysis is nearly impossible for a human to do outside of one or two vectors. Artificial intelligence can continuously analyze data streams to find unexpected insights that the driver can use to improve lap time.

AI makes it possible to create comparisons of different drivers or laps based on object-detection data from the video feed. For example, artificial intelligence could compare the entry and exit angles that a driver takes in turns from lap to lap or compare across different drivers to identify which turns were fastest.
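The comparison described above can be sketched in a few lines of Python. The example assumes each pass through a turn has already been reduced to an entry angle, an exit angle, and an elapsed time by the object-detection step; the `CornerPass` record, its fields, and the sample values are hypothetical.

```python
from typing import Dict, List, NamedTuple


class CornerPass(NamedTuple):
    # One driver's pass through one turn on one lap (hypothetical schema).
    driver: str
    lap: int
    turn: int
    entry_angle_deg: float
    exit_angle_deg: float
    time_s: float


def fastest_per_turn(passes: List[CornerPass]) -> Dict[int, CornerPass]:
    """Return the quickest recorded pass for each turn number."""
    best: Dict[int, CornerPass] = {}
    for p in passes:
        if p.turn not in best or p.time_s < best[p.turn].time_s:
            best[p.turn] = p
    return best


# Made-up data: two drivers, two turns.
passes = [
    CornerPass("Driver A", 1, 1, 12.0, 8.0, 4.31),
    CornerPass("Driver A", 2, 1, 10.5, 7.0, 4.18),
    CornerPass("Driver B", 1, 1, 11.0, 9.5, 4.25),
    CornerPass("Driver A", 1, 2, 20.0, 15.0, 6.02),
    CornerPass("Driver B", 1, 2, 18.0, 14.0, 5.90),
]
best = fastest_per_turn(passes)
for turn, p in sorted(best.items()):
    print(f"Turn {turn}: {p.driver}, lap {p.lap}, "
          f"entry {p.entry_angle_deg} deg, exit {p.exit_angle_deg} deg, {p.time_s} s")
```

Keeping the angles alongside the time lets an analyst see which line through the turn produced the fastest pass, whether the comparison is lap to lap for one driver or across drivers.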

In addition to the AI motorsports demo, Intel has dedicated a sizable chunk of its booth to highlight its role as a technology provider at the 2018 PyeongChang Olympics.

Beyond allowing the viewer to customize the race experience, Intel believes that the AI system will help broadcast analysts. Instead of an analyst checking the same handful of metrics over and over, AI can automatically recognize and identify subtle variations that may go unnoticed by a human analyst.

Odds and Ends: Intel and Sports at CES

Intel’s booth features demo stations highlighting company plans for next month’s Winter Olympics in South Korea, including stations focused on drones, VR, and 5G.

In addition, the company is showcasing its True View immersive media platform (previously called freeD and rebranded at CES), which creates multi-perspective, 360-degree replays and is used for the popular Be The Player feature. Now installed in 11 NFL stadiums, the technology’s capability to create replays from any angle has become a key element in both NFL telecasts and the in-venue video board experience.