What's Next?

These were some of the things that could be seen at Canon Expo at New York’s Javits Convention Center last week: ice skaters pirouetting without ice, people viewing someone dressed as the Statue of Liberty from the moving deck of a fake boat, a machine that can squirt out a printed-and-bound book on demand, and a hand-holdable x-ray system. Those weren’t directly related to the future of our business. But what about image sensors with 120 million pixels, sensor chips larger than paperback books, and cameras that capture more colors than merely red, green, and blue?

[The photo above, by the way, like the others in this post from Canon Expo, was shot by Mark Forman <http://screeningroom.com/> and is used here with his permission (all other rights reserved).]

We can extrapolate from the past to make certain predictions. It’s extremely likely, for example, that the sun will rise tomorrow (or, for those of a less-poetic bent, that the rotation of the Earth will cause…). Otherwise, we can’t predict the future, but we’re often put in a position of having to do so: Will this stock go up? Will it rain during an outdoor wedding ceremony? Will there be a better, less-expensive camera/computer/etc. after a purchase?

That last is usually as assured as a daily sunrise, but how soon the improvement will come and how great it will be are hard to know. For help, there are blogs like this one, publications, conferences, and trade shows.

The Internationale Funkausstellung (IFA) in Berlin, an international consumer electronics show, is an example of the latter.

At the latest IFA, among other stereoscopic 3D offerings (including 58-inch, CinemaScope-shaped, 21:9 glasses-based 3D), Philips spinoff Dimenco showed an auto-stereoscopic (no-glasses) 3D display. Here’s a portion of a photo of it that appeared on TechRadar’s site: http://www.techradar.com/news/television/hdtv/philips-to-launch-glasses-free-3d-tv-in-2013-713951

This is by no means the first time Philips has ventured into no-glasses 3D, but this one is different. Autostereoscopic displays usually involve a number of views, and the display resolution gets divided among them. The more views, the larger the viewing sweet spot and the better the 3D, but the lower the resolution of each view. The new display has five views horizontally and three vertically, but it starts with twice as much resolution as “full 1080-line HD” both horizontally and vertically, so the 3D images end up with a respectable 768 x 720 for each of 15 views.
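
The per-view arithmetic is easy to check. The sketch below assumes the panel is exactly twice 1920 x 1080 in each direction (3840 x 2160) and that its pixels are shared evenly among the views, a simplification of how real lenticular or barrier layouts interleave them. The function name is hypothetical.

```python
# Assumption: the panel is exactly twice "full 1080-line HD" in each direction
# and its pixels are shared evenly among the views (a simplification).
PANEL_W, PANEL_H = 2 * 1920, 2 * 1080  # 3840 x 2160


def per_view_resolution(panel_w, panel_h, views_h, views_v):
    """Return (width, height, view_count) when a panel's pixels are divided
    evenly among a grid of views_h x views_v viewing directions."""
    if panel_w % views_h or panel_h % views_v:
        raise ValueError("panel does not divide evenly into the view grid")
    return panel_w // views_h, panel_h // views_v, views_h * views_v


w, h, n = per_view_resolution(PANEL_W, PANEL_H, views_h=5, views_v=3)
print(f"{n} views of {w} x {h}")  # 15 views of 768 x 720

# The same 5 x 3 grid on an ordinary 1920 x 1080 panel:
print(per_view_resolution(1920, 1080, 5, 3))  # (384, 360, 15)
```

Starting from a doubled panel is what keeps each of the 15 views at 768 x 720 instead of 384 x 360.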

Perhaps such glasses-free 3D leads to a greater sensation of immersion, but there are other ways to create (or increase) an immersive sensation. Consider, for example, the CAVE (Cave Automatic Virtual Environment), a room with stereoscopic projections on at least three walls and the floor (sometimes all surfaces). The photo here is of a CAVE at the University of Illinois in 2001 (it was developed there roughly 10 years earlier). SGI brought a CAVE to the National Association of Broadcasters convention shortly after it was developed.

Visitors who wore ordinary 3D glasses saw ordinary 3D: boring. Visitors who got to wear a special pair of 3D glasses that tracked their head movements, however, were transported into a virtual world that responded to their every movement, even though the images on the screens were exactly the same ones everyone else saw. Unfortunately, only one viewer at a time could get the immersive experience.
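
Why should head tracking matter so much? A minimal sketch of the geometry (not the CAVE’s or SGI’s actual software) shows it for a single wall. It assumes the wall is the plane z = 0, distances are in meters, the viewer stands in front of it, and the virtual object sits “behind” it. The point is drawn where the line of sight from the eye to the virtual point crosses the wall, so the correct drawing depends on where the eye is. The names `Vec3` and `project_to_wall` are illustrative.

```python
from typing import NamedTuple


class Vec3(NamedTuple):
    x: float
    y: float
    z: float


def project_to_wall(eye: Vec3, point: Vec3) -> tuple[float, float]:
    """Return the (x, y) spot on the wall plane z = 0 where a virtual point
    must be drawn so that, seen from `eye`, it appears at its 3D position.
    Assumes the viewer is in front of the wall (eye.z > 0) and the virtual
    point is "behind" it (point.z < 0)."""
    if eye.z <= 0 or point.z >= 0:
        raise ValueError("eye must be in front of the wall and the point behind it")
    # Walk along the line of sight eye + t * (point - eye) until z reaches 0.
    t = eye.z / (eye.z - point.z)
    return (eye.x + t * (point.x - eye.x), eye.y + t * (point.y - eye.y))


virtual_point = Vec3(0.0, 1.5, -1.0)  # 1 m behind the wall, at eye height
print(project_to_wall(Vec3(0.0, 1.5, 2.0), virtual_point))  # (0.0, 1.5)
print(project_to_wall(Vec3(1.0, 1.5, 2.0), virtual_point))  # x ≈ 0.333
```

Moving the tracked head 1 m to the right shifts where the point must be drawn by a third of a meter. Anyone else in the room sees the drawing made for the tracked head, which is one reason only that viewer gets a perspective that responds correctly to movement.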

At Canon Expo, however, there was “mixed reality.” It’s based on head-mounted displays using two prisms per eye. One, a special “free-form prism,” delivers images from a small display to the eye. The other passes “real-world” images from in front of the viewer to both the eye and a video camera that can tell what the viewer is looking at.

The result is definitely mixed reality, a combination of stereoscopic imagery with unprocessed vision, with the 3D virtual images conforming to objects and views in the “real world.” Virtual images can even be mapped onto real-world surfaces, with the cameras in the headgear telling the processors how to warp the virtual images appropriately. This photo shows a complex version of the headgear; other mixed-reality viewers at Canon Expo looked little different from some 3D glasses. Canon’s “interactive mixed reality” brochure showed people wearing the headgear walking around and collaboratively discussing an object that doesn’t exist.
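
Canon didn’t describe how its processors warp the virtual images, so the following is only an illustration of one common textbook technique: a planar homography estimated from four corner correspondences (the direct linear transform with the last matrix entry fixed to 1). It maps a flat virtual image onto a flat real surface whose corners a camera has located. The corner coordinates below are hypothetical, and the small Gaussian-elimination solver stands in for a linear-algebra library.

```python
def solve_linear(a, b):
    """Solve the square system a x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [list(row) + [rhs] for row, rhs in zip(a, b)]  # augmented matrix
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        if abs(m[pivot][col]) < 1e-12:
            raise ValueError("degenerate point correspondences")
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            f = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= f * m[col][c]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):  # back substitution
        x[r] = (m[r][n] - sum(m[r][c] * x[c] for c in range(r + 1, n))) / m[r][r]
    return x


def estimate_homography(src, dst):
    """Return a 3x3 homography H (with H[2][2] = 1) mapping four src points onto four dst points."""
    a, b = [], []
    for (x, y), (u, v) in zip(src, dst):
        # u * (h6 x + h7 y + 1) = h0 x + h1 y + h2, rearranged to be linear in h
        a.append([x, y, 1, 0, 0, 0, -x * u, -y * u])
        b.append(u)
        a.append([0, 0, 0, x, y, 1, -x * v, -y * v])
        b.append(v)
    h = solve_linear(a, b)
    return [h[0:3], h[3:6], h[6:8] + [1.0]]


def apply_homography(H, x, y):
    """Map one point through H, including the perspective divide."""
    w = H[2][0] * x + H[2][1] * y + H[2][2]
    return ((H[0][0] * x + H[0][1] * y + H[0][2]) / w,
            (H[1][0] * x + H[1][1] * y + H[1][2]) / w)


# Corners of a flat virtual image (unit square) and where a headset camera
# hypothetically sees the corners of a real tabletop, in pixels.
virtual_corners = [(0, 0), (1, 0), (1, 1), (0, 1)]
seen_corners = [(100, 120), (400, 100), (420, 380), (90, 360)]
H = estimate_homography(virtual_corners, seen_corners)
print(apply_homography(H, 0.5, 0.5))  # where the image center gets drawn
```

A real system would also track the surface from frame to frame and handle non-flat objects; this covers only the flat-surface case.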

Another form of immersion involves capturing 360-degree images. At left is the Immersive Media Dodeca® 2360 camera system, combining the images from 11 different cameras and lenses into a seamless panorama. At Canon Expo, a 360-degree view was achieved with a single lens, a single imaging chip (8984 x 5792, with 3.2 μm pixel pitch) and a mirror shaped like a cross between a donut and a cone that is, in the words of one high-ranking Canon employee, “the single most-precise optical component the company makes.” The whole package forms a roughly fist-sized bump.
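
A single-lens, single-mirror system like this records the surroundings as a ring on the sensor, which software then “unwraps” into a rectangular panorama. The sketch below shows the generic polar-to-rectangular unwrap under loudly stated assumptions: the ring’s center and radii are hypothetical, radius is assumed to vary linearly with panorama row, and the outer edge is assumed to map to the top. A real mirror like Canon’s would need a calibrated radial profile, which the company did not publish. Nearest-neighbor sampling keeps the example short.

```python
import math


def panorama_to_annulus(u, v, pano_w, pano_h, cx, cy, r_inner, r_outer):
    """For panorama pixel (u, v), return the (x, y) point in the ring-shaped
    sensor image to sample. Column u sweeps the full 360 degrees; row v runs
    linearly (an assumption) from the outer edge of the ring to the inner edge."""
    if pano_h < 2:
        raise ValueError("panorama needs at least two rows")
    theta = 2.0 * math.pi * u / pano_w
    r = r_outer - (r_outer - r_inner) * v / (pano_h - 1)
    return cx + r * math.cos(theta), cy + r * math.sin(theta)


def unwrap(image, pano_w, pano_h, cx, cy, r_inner, r_outer):
    """Nearest-neighbor unwrap of a ring image (a list of pixel rows) into a panorama."""
    rows, cols = len(image), len(image[0])
    pano = []
    for v in range(pano_h):
        row = []
        for u in range(pano_w):
            x, y = panorama_to_annulus(u, v, pano_w, pano_h, cx, cy, r_inner, r_outer)
            xi, yi = round(x), round(y)
            # Samples that fall outside the sensor image become 0 (black).
            row.append(image[yi][xi] if 0 <= yi < rows and 0 <= xi < cols else 0)
        pano.append(row)
    return pano


# Hypothetical ring on an 8984 x 5792 sensor, centered, radii in pixels.
print(panorama_to_annulus(0, 0, 4096, 1024, 4492, 2896, 1200, 2800))
```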

Of course, immersiveness is only one visual sensation. There are also sharpness and color.

If you work out the math on that Canon 360-degree image sensor, it comes to about 52 million pixels, considerably more than the roughly 33 million of even NHK’s Super Hi-Vision (also known as ultra high-definition television, with four times the detail of 1920 x 1080 HDTV in both the horizontal and vertical directions).

Across the room from Canon’s 360-degree system, however, was their version of ultra-high resolution, with roughly eight times the detail of 1080-line HDTV in both directions.

Four Super Hi-Vision pictures could fit into one from this hyper-resolution sensor. Canon says its resolution is comparable to the number of human optic nerves.
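
The pixel counts in the last few paragraphs can be checked with a few multiplications. This sketch takes “roughly eight times the detail of 1080-line HDTV in both directions” literally as 15360 x 8640, which is an assumption, since the post doesn’t give that sensor’s exact dimensions. It also assumes the reported 3.2 μm pitch applies across the whole 360-degree sensor when estimating its active area.

```python
HD = (1920, 1080)
FORMATS = {
    "1080-line HDTV": HD,
    "Super Hi-Vision": (4 * HD[0], 4 * HD[1]),       # 7680 x 4320
    "Canon 360-degree": (8984, 5792),                # as reported at the show
    "Canon hyper-res (8x)": (8 * HD[0], 8 * HD[1]),  # "roughly eight times", taken literally
}
PITCH_MM = 3.2e-3  # 3.2 um pixel pitch reported for the 360-degree sensor


def pixel_count(fmt):
    w, h = FORMATS[fmt]
    return w * h


for name, (w, h) in FORMATS.items():
    print(f"{name:>22}: {w:>5} x {h:<5} = {pixel_count(name) / 1e6:6.1f} MP")

w360, h360 = FORMATS["Canon 360-degree"]
print(f"360-degree sensor area ≈ {w360 * PITCH_MM:.1f} x {h360 * PITCH_MM:.1f} mm")
print(pixel_count("Canon hyper-res (8x)") / pixel_count("Super Hi-Vision"))  # 4.0
```

Taken literally, eight times in each direction gives about 133 million pixels, somewhat more than the 120 million mentioned at the top of this post, which is consistent with the word “roughly.” Either way, four Super Hi-Vision frames fit.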

The chip’s full detail can currently be captured at only about 1.4 frames per second, but while it is shooting hyper-detailed stills, it can (if I interpreted the information provided correctly) simultaneously capture two full-motion, full-detail HDTV streams from within the image. The system uses a one-of-a-kind lens, and it’s a work in progress.

The hyper-resolution image sensor had a roughly full-frame 35mm format (comparable to that in the Canon EOS 5D Mark II DSLR), already roughly four-and-a-half times taller than a 2/3-inch format image sensor. A few feet away was another new sensor that was larger, much larger. It was made from a semiconductor wafer the size of a dinner plate, and the sensor itself was the size of an old 8-inch-square floppy disk. Huge!
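
For a sense of scale, here is the arithmetic behind these size comparisons. It assumes a 36 x 24 mm full frame, a 9.6 x 5.4 mm active area for a 16:9 2/3-inch video sensor (a typical figure, not one given at the show), and a sensor exactly 8 inches square. At the same f-number and exposure time, the light falling on each square millimeter is the same, so total light collected scales with area; how that becomes per-pixel sensitivity depends on pixel size, which wasn’t given.

```python
import math

MM_PER_INCH = 25.4
FULL_FRAME = (36.0, 24.0)                    # 35mm full frame, as in the EOS 5D Mark II
TWO_THIRDS_INCH = (9.6, 5.4)                 # assumed 16:9 active area of a 2/3-inch sensor
FLOPPY = (8 * MM_PER_INCH, 8 * MM_PER_INCH)  # an 8-inch-square area


def area(dims):
    w, h = dims
    return w * h


height_ratio = FULL_FRAME[1] / TWO_THIRDS_INCH[1]
area_ratio = area(FLOPPY) / area(FULL_FRAME)

print(f"full frame is {height_ratio:.1f}x as tall as 2/3-inch")        # 4.4x
print(f"8-inch square has {area_ratio:.1f}x the area of full frame")  # 47.8x
# Same f-number and exposure time: total light scales with sensor area.
print(f"about {math.log2(area_ratio):.1f} stops more total light")
```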

What do you get from such a huge sensor? Extraordinary sensitivity and dynamic range. One scene (said to have been shot at 60 frames per second with an aperture of f/6.8) showed stars in the sky as seen through a forest canopy, and it was easy to see that the leaves and needles of the trees were green. In another scene, a woman walks in front of a table lamp, so she is backlit, yet every detail and shade of gray on the front of her was clearly visible.

Canon Expo demonstrated advances both in immersiveness (aside from the 360-degree and mixed-reality systems, there was also the 9-meter dome projection shown at right) and in spatial sharpness (the hyper-resolution and giant image sensors, the latter because it can deliver greater contrast, which affects perceived sharpness). Temporal sharpness (high frame rate) and spatio-temporal sharpness also affect our perception of sharpness. I found no demonstrations of increased temporal or dynamic resolution at Canon Expo, but that doesn’t mean they’re not being developed.

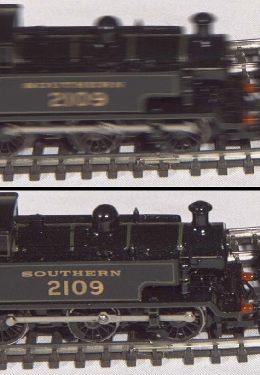

The images at left are portions taken from BBC R&D White Paper number 169, “High Frame-Rate Television,” published in September 2008. It’s available at <http://www.bbc.co.uk/rd/pubs/whp/whp169.shtml>. The upper picture shows a toy train shot at the equivalent of 50 frames per second; the lower picture shows the same train at 300 fps. Note that the stationary tracks and ties are equally sharp in both pictures, but the higher frame rate makes the moving train sharper in the lower picture.
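
The reason only the train sharpens is that motion blur is the distance an object travels during one exposure. A rough sketch, assuming an open (360-degree) shutter so that each exposure lasts a full frame period, and a hypothetical train speed of 600 pixels per second; neither figure comes from the white paper.

```python
def blur_length_px(speed_px_per_s, fps, shutter_angle_deg=360.0):
    """Length of the smear, in pixels, left by an object moving at constant
    speed during one exposure. Exposure time = (shutter angle / 360) / fps."""
    exposure_s = (shutter_angle_deg / 360.0) / fps
    return speed_px_per_s * exposure_s


TRAIN_SPEED = 600.0  # hypothetical speed of the toy train across the frame, px/s
for fps in (50, 300):
    moving = blur_length_px(TRAIN_SPEED, fps)
    still = blur_length_px(0.0, fps)  # the stationary tracks and ties
    print(f"{fps:>3} fps: train smear {moving:4.1f} px, track smear {still:.1f} px")
```

Going from 50 to 300 frames per second cuts the smear by a factor of six, while anything that isn’t moving shows no smear at either rate.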

As this post shows, there is immersiveness, and there is sharpness (both spatial and temporal). Is there anything else that future imaging might bring? How about advances in color?

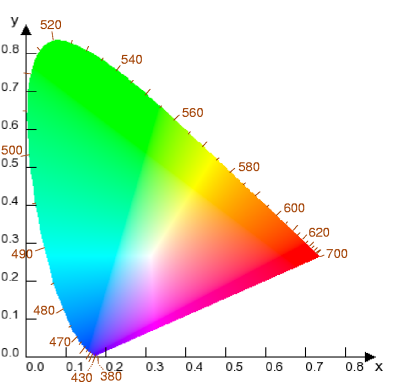

Ever since its earliest days, color video has been based on three color primaries. As this chromaticity diagram shows, however, human vision encompasses a curved space of colors, whereas any three primaries within that space define a triangle, excluding many colors.
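
Whether a color can be reproduced by a three-primary system comes down to whether its chromaticity falls inside the primaries’ triangle. The post doesn’t name a particular standard, so this sketch uses the ITU-R BT.709 (HDTV) primaries as an example and a standard point-in-triangle test based on cross-product signs. It considers chromaticity (x, y) only and ignores brightness.

```python
# ITU-R BT.709 primaries as CIE 1931 (x, y) chromaticity coordinates.
BT709 = ((0.640, 0.330), (0.300, 0.600), (0.150, 0.060))  # R, G, B


def _cross(o, a, b):
    """z-component of (a - o) x (b - o): which side of line o->a point b lies on."""
    return (a[0] - o[0]) * (b[1] - o[1]) - (a[1] - o[1]) * (b[0] - o[0])


def in_gamut(xy, primaries=BT709):
    """True if chromaticity xy lies inside (or on the edge of) the primaries' triangle."""
    r, g, b = primaries
    d1, d2, d3 = _cross(r, g, xy), _cross(g, b, xy), _cross(b, r, xy)
    has_neg = d1 < 0 or d2 < 0 or d3 < 0
    has_pos = d1 > 0 or d2 > 0 or d3 > 0
    return not (has_neg and has_pos)  # inside only if all signs agree


print(in_gamut((0.3127, 0.3290)))  # D65 white point: True
print(in_gamut((0.0743, 0.8338)))  # spectral green near 520 nm: False
```

The saturated green at 520 nm lies on the curved edge of human vision but well outside the triangle, an example of the colors any three primaries exclude.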

At Canon Expo, one portion of the new-technologies section was devoted to hand-held displays that could be tilted back and forth to show the iridescence of butterfly wings and other natural phenomena. The point of the demonstration wasn’t the displays but a multi-band camera that captures six color bands instead of three.

Then there was the Tsuzuri Project exhibit at Canon Expo (http://www.canon.com/tsuzuri/index.html). It was a gorgeous reproduction of an ancient Japanese screen. Advanced digital technology was used to capture and reproduce the detail of the original, but then a master gold-leaf artist used his talents to complete the copy.

I look forward to future tools based on what I saw at Canon Expo as well as the BBC’s high frame-rate viewing, Immersive Media’s camera system, and even the Philips autostereoscopic display. And I’m glad that human artists are still needed to use them.

Tags: 3D, autostereoscopic, BBC R&D, Canon, Canon Expo, Dimenco, Dodeca, immersive media, mixed reality, Philips, Tsuzuri Project, ultra-resolution