Can You Fix It in Post?

Can you fix it in post?

The simple answer is: Yes.

Consider Avatar. Not only was an entire world created and populated in computers, but even a human actor’s legs were atrophied in post. So, yes, anything can be fixed in post — given enough time and money. In the worst case, artists would simply “paint” photorealistic images, pixel by pixel and frame by frame.

It wasn’t always so, especially in electronic imagery. Before “paint” systems, post was extremely limited. Cuts, dissolves, wipes, and keys were possible, but, in the days of analog recorders, even those often degraded images. “We’ll fix it in post” became a laughter-inducing cliché.

Today, not even counting “painting,” there are many real-time processes that can replace production activities. Rather than having an image specialist controlling camera parameters during shooting, the raw signals from the sensors can be recorded, with a post-production colorist dealing with them. Instead of optical filters in front of or behind the lens, post-production filters can achieve much the same effects. Instead of worrying about large, stable camera mounts or optical image stabilizers, producers can turn to post-production image stabilization.

And then there’s 3D.

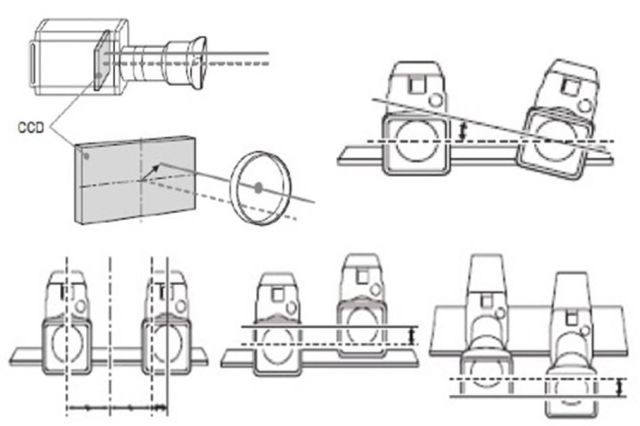

The images above are taken from the brochure for Sony’s MPE200 stereoscopic image processor. They show some of the post-shooting corrections the system can accomplish. At top left there is correction of inter-camera image center as a lens zooms, at top right correction of inter-camera rotation, and, at bottom, from left to right, correction of interaxial spacing, inter-camera elevation, and even inter-camera distance from the scene.

Let’s start with the interaxial-spacing adjustment. It can move homologous points in the two eye views closer together or farther apart. Unfortunately, that’s not the only difference between the two camera views.

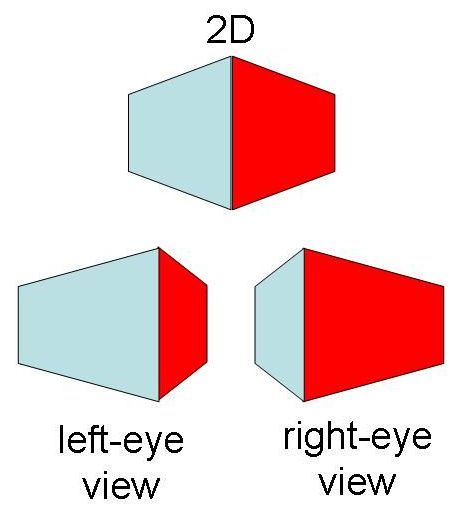

The top image above is what a single camera might see when shooting an edge of a cube or building. Below it are the views of separated eyes. The left eye (or left camera) sees more of the left side of the object; the right sees more of the right side. Depending on the exact positioning, shooting distance, and object, one camera might even see things that the other doesn’t. There’s no way that Sony’s MPE200, or anyone else’s post-production processor, can know how to put things into the picture that weren’t there in the original.

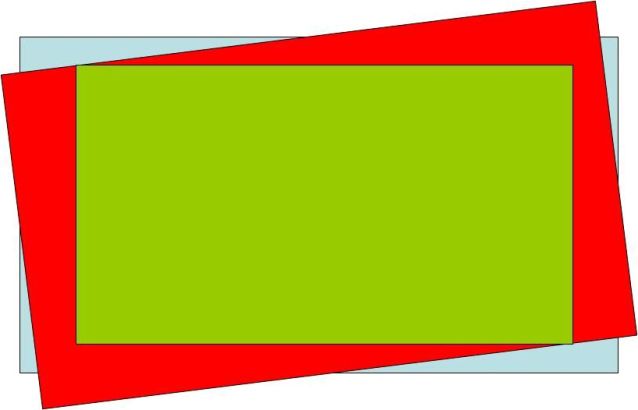

Now consider some of the other corrections, like that of inter-camera rotation. An HDTV frame is a 16:9 rectangle. If one camera’s rectangle is rotated with respect to another’s, as shown above in the blue and red rectangles, the only way to get them to line up is to trim the content of each, as shown in the green rectangle. That changes the original framing.
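To get a feel for how much framing is lost, here is a rough geometric sketch in Python. It assumes, purely for illustration, that one frame is rotated about its center relative to the other and that the shared picture must be a centered rectangle of the same shape, lined up with the unrotated frame. Nothing here comes from Sony’s brochure, and the function name `common_frame_scale` is made up for the example.

```python
import math

def common_frame_scale(width, height, angle_degrees):
    """Scale (0 to 1) of the largest centered, same-shape rectangle that
    fits inside a width x height frame rotated by angle_degrees."""
    theta = math.radians(angle_degrees)
    c, s = abs(math.cos(theta)), abs(math.sin(theta))
    # Each corner of the shared rectangle must stay inside the rotated
    # frame along both of that frame's axes; the tighter limit wins.
    limit_along_width = width / (width * c + height * s)
    limit_along_height = height / (width * s + height * c)
    return min(1.0, limit_along_width, limit_along_height)

scale = common_frame_scale(1920, 1080, 1.0)
print(f"usable picture: {1920 * scale:.0f} x {1080 * scale:.0f}")
```

Under these assumptions, a one-degree mismatch between two 1920 x 1080 frames costs roughly 3 percent of the picture in each direction, and the loss grows quickly as the angle increases.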

It’s not just a 3D problem. With a stable mount or optical image stabilization, what the shooter sees is (not counting overscan or intentional changes in post) what the viewer sees. With post-production image stabilization, it can be very different.

Have a look at the second (Mounts — the problem), third (Mounts — fixed in post), and fourth (Mounts — not exactly fixed) files available on this download page: http://schubincafe.com/blog/2010/06/things-you-can-or-can’t-fix-in-post-video-acquisition/. They were provided by Aseem Agarwala of Adobe Systems, and they demonstrate the tremendous power of post-production image stabilization.

The first of these clips is an example of a horribly unstable image, shot with a handheld camera. The next shows the post-processed result, so smooth that it appears to have been shot by an experienced crew with a camera mounted on a dolly on track.

The last clip, however, shows the original and the stabilized versions together. There’s no question that the image has been marvelously stabilized, but the framing is so different that the second story of the building in the background disappears completely in the corrected version.
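The framing loss can be estimated with a back-of-the-envelope sketch. It assumes a translation-only stabilizer that slides each frame to cancel its shake and then keeps only the centered window that every shifted frame still covers. That is a deliberate simplification for illustration, not a description of the technique behind these clips, and `stabilized_window` and the shake values are hypothetical.

```python
def stabilized_window(width, height, shake_offsets):
    """Size of the centered window still covered after every frame is
    shifted to cancel its (dx, dy) shake, measured in pixels."""
    max_dx = max((abs(dx) for dx, _ in shake_offsets), default=0)
    max_dy = max((abs(dy) for _, dy in shake_offsets), default=0)
    # The worst shake in each direction eats into both edges of the window.
    usable_width = max(0, width - 2 * max_dx)
    usable_height = max(0, height - 2 * max_dy)
    return usable_width, usable_height

# Illustrative handheld shake for three frames of a 1920 x 1080 shot.
shake = [(30, -10), (-45, 20), (12, 60)]
print(stabilized_window(1920, 1080, shake))
```

Even in this simple model, the worse the shake, the smaller the window that survives, which is why the shooter’s framing and the viewer’s framing can end up so far apart.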

It’s not just framing. If there’s any process that can be perfectly duplicated in post, it’s the adjustment of the color parameters of the signal produced by a camera’s image sensors. As long as all of the information is recorded, it makes no difference from a technical standpoint whether the adjustments are made at the camera or in a colorist’s suite. Unfortunately, there are standpoints other than technical.

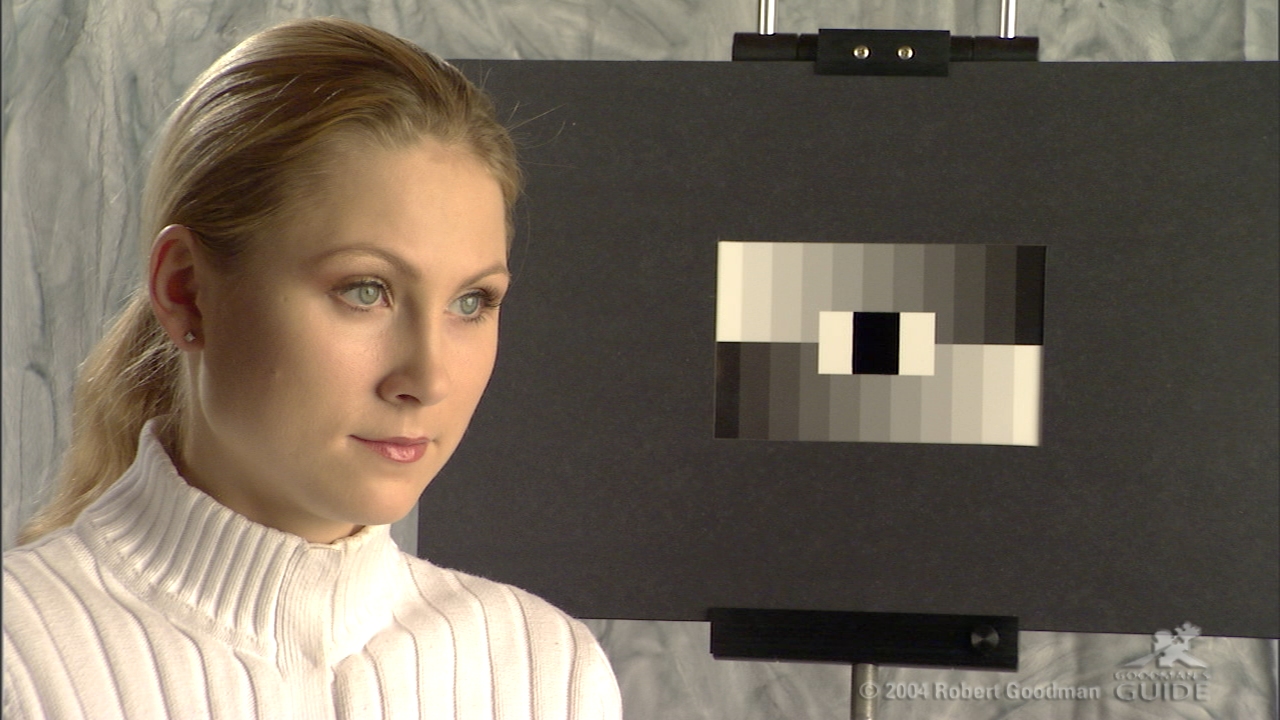

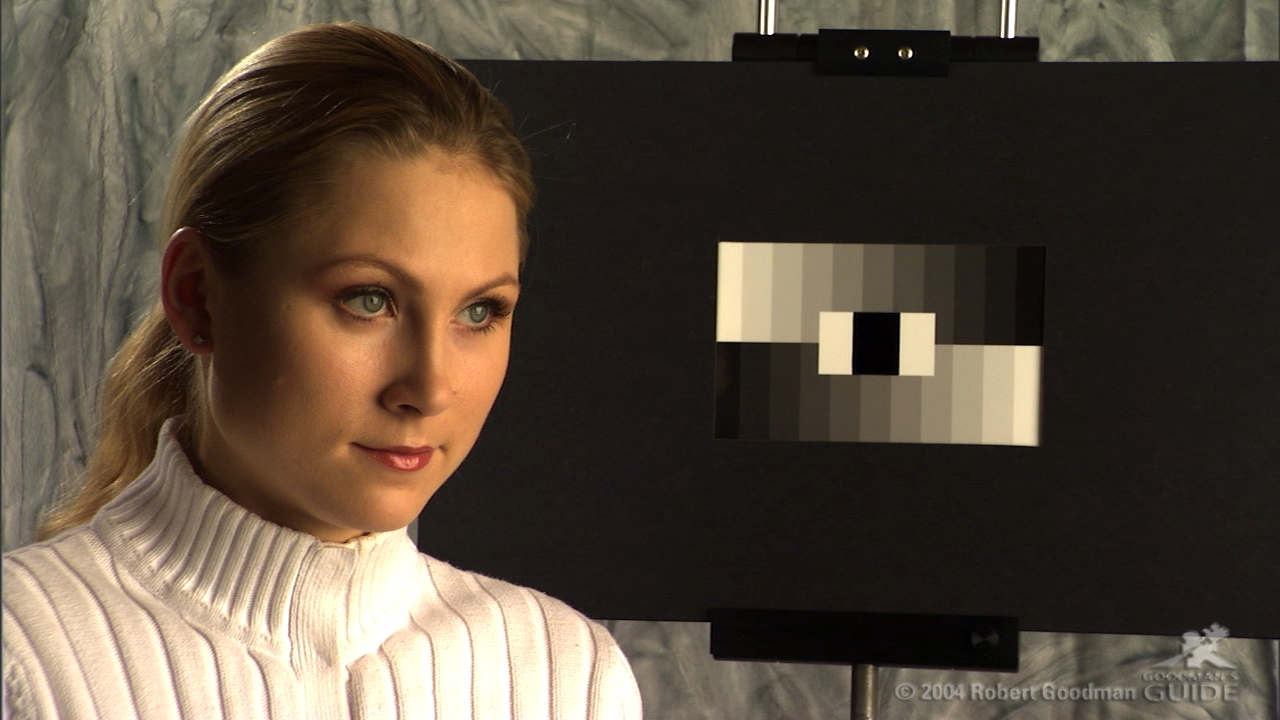

The two pictures above are taken from the book Goodman’s Guide to the Panasonic Varicam by Robert Goodman (AMGMedia Publishers, 2004), http://www.goodmansguide.com/theseries.html; Goodman’s own site is at http://www.histories.com/hjemmeside.html. The upper picture has the master gamma set to 0.35; in the lower picture, it’s 0.75.

Neither is necessarily better, and neither is necessarily “right.” They are simply different.
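As a rough illustration of why two gamma settings can look so different, the sketch below runs a few normalized scene values through a plain power-law curve. The power law is an assumption made for the example; nothing here says what curve the Varicam’s master-gamma control actually applies, and `apply_gamma` is a hypothetical helper.

```python
def apply_gamma(value, gamma):
    """Map a normalized linear value (0.0 to 1.0) through a power-law curve."""
    clipped = min(1.0, max(0.0, value))
    return clipped ** gamma

scene_values = [0.0, 0.05, 0.18, 0.5, 1.0]  # illustrative linear levels
for gamma in (0.35, 0.75):
    curve = [round(apply_gamma(v, gamma), 3) for v in scene_values]
    print(f"gamma {gamma}: {curve}")
```

Black and peak white stay put with either setting; everything in between is lifted much more by the lower gamma. Run on the same recorded values, the function returns the same numbers whether it is applied at the camera or in a colorist’s suite.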

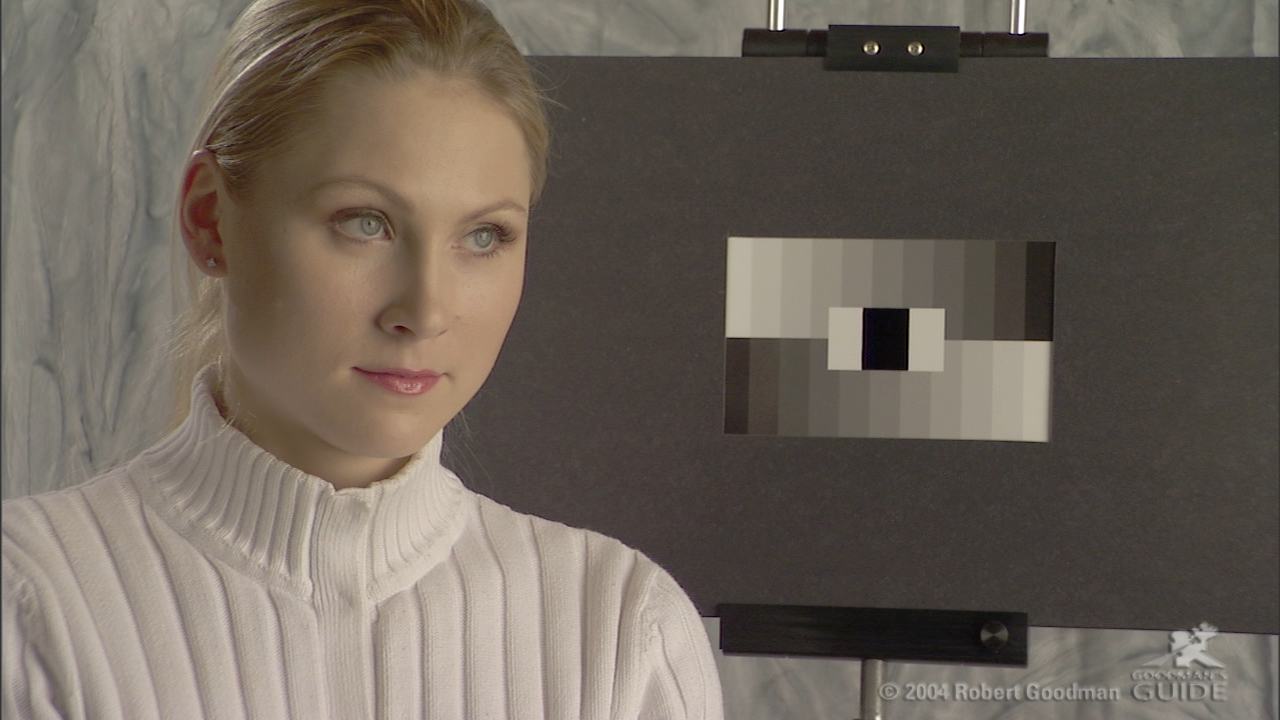

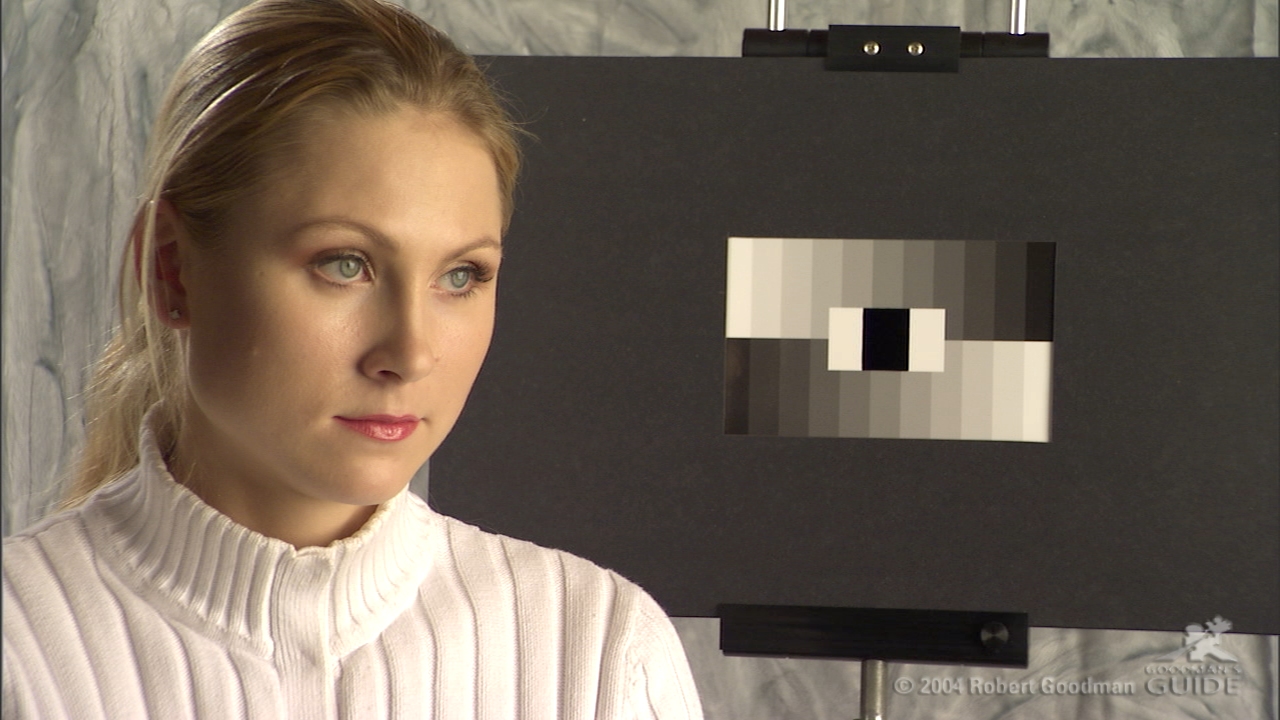

Above is another pair of images from the same book. The upper one has dynamic level set to 500; the lower is at 200. Notice that all of the detail of the collar is easily seen in the 500 version. On the other hand, the face seems desaturated. Again, neither is necessarily good or right. But there are major differences among these four pictures (there are even more image pairs in the book, demonstrating other parameters).

If the adjustments were made in production, the director might have liked some characteristics of the image (say, the collar detail) but not others (say, the desaturated face) and changed things to compensate (different lighting, makeup, or clothing, for example). In post, the video parameters can be changed at will, but the lighting, makeup, and clothing remain the same unless an artist (or, more likely, a team of artists) repaints the images, pixel by pixel and frame by frame, as they might have been captured in the first place.

If you have enough money and time, you can do anything in post. For the rest of us, it’s a good idea to try to achieve desired looks in production.