Late–and see?

Here are two facts to ponder: First, there is no such thing as perfect lip sync. Second, at Super Bowl parties across the country this coming February, many–if not most–TVs will have their sound turned off. But, first, given the upcoming U.S. Presidential inauguration, consider a different one, the inauguration of Warren Harding on March 4, 1921.

The inauguration of James Buchanan in 1857 was the first known to have been photographed. That of William McKinley in 1897 was the first shot by a movie camera. Theodore Roosevelt’s in 1905 was the first to have a telephone line for fast reporting. Calvin Coolidge’s in 1925 was the first broadcast nationally on radio. Herbert Hoover’s in 1929 was the first captured as a sound movie. Harry Truman’s in 1949 was the first televised. Bill Clinton’s, in 1997, was the first streamed. But Harding’s (left) was the first with amplified sound. It was, therefore, the first at which those at the edge of the crowd had a hope of hearing the speeches.

At Barack Obama’s inauguration in 2009, there were not only amplified words but also “amplified” (enlarged and distributed) pictures for the gigantic crowd. And that’s a problem, because sound and light don’t travel at the same speed.

Some of those distributed pictures are depicted at right in a portion of a copyrighted image by photographer Robert McNeely taken from the “A Thousand Words” Kodak blog <http://1000words.kodak.com/thousandwords/post/?id=2316071>. To the right of the screen, speakers can be seen.

In the black-&-white image above left, Harding, if he can be seen at all, is barely more than a dot. In the color image above right, Obama’s face on the big screen is clearly visible as a close-up. Anyone who can see that face sees it essentially instantaneously; in the duration of a single U.S. television frame, light travels well over 6,000 miles through a vacuum (and not much slower through air). Sound, however, at the 28-degree-Fahrenheit air temperature during the Obama inauguration, would have traveled about 36 feet during that same frame.
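Those per-frame distances can be checked with a little arithmetic. The sketch below assumes a U.S. frame rate of 30000/1001 (about 29.97) frames per second and a common dry-air approximation for the speed of sound; the function and constant names are illustrative only.

```python
import math

# Assumption: U.S. television frame rate of 30000/1001, about 29.97 frames/s.
FRAME_RATE_HZ = 30000 / 1001
LIGHT_MILES_PER_S = 186_282.397  # speed of light in a vacuum
METERS_PER_FOOT = 0.3048


def speed_of_sound_ft_per_s(temp_f):
    """Approximate speed of sound in dry air at a Fahrenheit temperature."""
    temp_c = (temp_f - 32) * 5 / 9
    meters_per_s = 331.3 * math.sqrt(1 + temp_c / 273.15)
    return meters_per_s / METERS_PER_FOOT


frame_s = 1 / FRAME_RATE_HZ
light_miles_per_frame = LIGHT_MILES_PER_S * frame_s
sound_feet_per_frame = speed_of_sound_ft_per_s(28) * frame_s

print(f"Light per frame: {light_miles_per_frame:,.0f} miles")
print(f"Sound per frame at 28 F: {sound_feet_per_frame:.1f} feet")
```

Under these assumptions, light covers a little over 6,200 miles per frame and sound about 36 feet, in line with the figures in the text.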

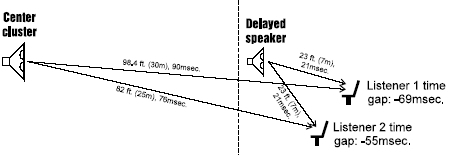

When sound is distributed via multiple speakers, delays are typically introduced to deal with the distance that sound has to travel. In the example illustrated above, from the Group Technologies e-magazine Filter <http://www.gtaust.com/filter/08/07.shtml>, a center cluster of speakers above a stage carries live sound, and the feeds to speakers under a balcony are delayed so that their sound arrives at the same time. When enlarged and distributed pictures are added, however, things get more complicated.
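The delay for such a fill speaker comes down to the extra distance the main cluster's sound has to travel. Here is a minimal sketch, assuming a fixed speed of sound of about 1,125 feet per second (roughly room temperature) and hypothetical distances; real systems are tuned by measurement and often add a small offset on top.

```python
# Assumption: speed of sound near 68 degrees Fahrenheit, in feet per second.
SPEED_OF_SOUND_FT_PER_S = 1125.0


def fill_delay_ms(main_to_listener_ft, fill_to_listener_ft,
                  speed_ft_per_s=SPEED_OF_SOUND_FT_PER_S):
    """Delay to apply to a fill speaker so its sound arrives together
    with the sound from the main cluster at the listener's position."""
    extra_ft = main_to_listener_ft - fill_to_listener_ft
    if extra_ft < 0:
        raise ValueError("the fill speaker should be the closer source")
    return extra_ft / speed_ft_per_s * 1000.0


# Hypothetical layout: a listener under the balcony sits 80 feet from the
# center cluster and 10 feet from the nearest under-balcony speaker.
delay = fill_delay_ms(80, 10)
print(f"Delay the under-balcony feed by about {delay:.0f} ms")
```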

At right is just one of those Obama-inauguration screen-speaker combinations. If the video were delayed to match the sound, someone standing just in front of the screen would have seen what appeared to be good lip sync. Someone standing 72 feet farther back would have heard the sound a couple of frames later but wouldn’t necessarily have seen that as poor lip sync.
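The "couple of frames" figure follows from the same arithmetic. This sketch assumes the 28-degree-Fahrenheit temperature mentioned earlier, a frame rate of 30000/1001 frames per second, and a dry-air approximation for the speed of sound; all names are illustrative.

```python
import math

# Assumption: U.S. television frame rate of 30000/1001, about 29.97 frames/s.
FRAME_RATE_HZ = 30000 / 1001


def speed_of_sound_ft_per_s(temp_f):
    """Approximate speed of sound in dry air at a Fahrenheit temperature."""
    temp_c = (temp_f - 32) * 5 / 9
    return 331.3 * math.sqrt(1 + temp_c / 273.15) / 0.3048


def audio_lag_frames(extra_distance_ft, temp_f=28.0):
    """Frames by which sound trails the picture for a viewer standing
    extra_distance_ft farther from the speakers than the sync point."""
    seconds = extra_distance_ft / speed_of_sound_ft_per_s(temp_f)
    return seconds * FRAME_RATE_HZ


for distance_ft in (0, 36, 72, 144):
    lag = audio_lag_frames(distance_ft)
    print(f"{distance_ft:>4} ft farther back: audio lags by {lag:.2f} frames")
```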

Our brains have learned that sound from distant images should be late; we think nothing, for example, of thunder arriving seconds after a lightning flash. That’s one reason there’s no such thing as perfect lip sync. When standing right in front of someone, we expect sound at one latency; watching a speaker at a lectern from 50 feet away, we expect a different sound latency. Proper lip sync in a living room is different from proper lip sync in a cinema auditorium. And, therefore, even simply cutting from a wide shot to a close-up can make lip sync appear to change.

Now consider the Obama inauguration again. Screens can be viewed only from the front. Speakers can be heard, however, even from behind. Someone standing behind the screen at the left would watch the closer screen seen to its right. But that viewer would still be hearing sound from the speakers to the rear. The closer the viewer gets to the screen in front, the worse the lip sync from those rear speakers would appear to be.

In home TV viewing, there shouldn’t be such an issue, if all is well designed, but it’s not just acoustic delay that affects lip sync. From the first television patent until the digital age, television was a synchronous medium. Whatever was seen by the television camera was displayed simultaneously on the TV screen except for the addition of tiny transmission delays (even with completely different transmission technologies used for pictures and sound, the lip-sync error coast-to-coast was much less than one frame).

Even after the introduction of videotape, what viewers saw was synchronous to the tape playback. But then came uneven processing of pictures and sounds. Satellites delayed both equally, but a satellite feed from a country using a different frame rate required picture processing that delayed the video but not the audio. Frame synchronizers for remote feeds introduced still more video delay (or latency). Digital video effects introduced yet more.

Perhaps the greatest latencies were introduced by bit-rate reduction systems used for recording and distribution, more commonly called compression. Television changed from synchronous (happening at the same time) to isochronous (happening in the same amount of time but not necessarily simultaneously). That seems okay in a well-designed system. Who cares if pictures and sounds that are in lip sync happen at one moment or a few frames later?

For most home viewing, it doesn’t matter. For some, it does. At left is a photo taken in Peter Putman’s basement during his 2003 Super Bowl viewing party. It originally appeared in this Home Theater article about the party: <http://www.hometheater.com/content/your-face-football>. Putman is famous for his Super Bowl parties; they’ve even been covered in the news. Why? It’s because he provides at least one TV screen per room–including the bathroom–and sometimes even outdoors.

If a TV outdoors shows the Super Bowl in lip sync three frames later than one in a bathroom, who cares? But if a TV on one side of a room is even one frame off from a TV on the other side of the same room, the difference in audio latency can drive viewers out of the room. The solution is simple: Turn off the sound on all but one of the TVs.

That doesn’t necessarily solve all problems. Below is the monitor wall of the main control room of All Mobile Video’s Titan mobile production truck when it was built. Like many these days, it uses large LCD monitors (which have latency) fed by multi-view processors (which also have latency). It also uses both LCD monitors without multi-view processing and latency-free picture-tube monitors. Even if an extraordinary number of video delays were used to bring all of the images into time synchronization and audio delays used to maintain lip-sync, the sound heard on camera-operator headsets via the control-room intercom would then be out of sync with the live action.

Titan is the production truck used by The Metropolitan Opera: Live in HD, the number-one alternative content for cinemas worldwide (currently seen in 64 countries). The Met also presents its opening night each year free on the plaza in front of the opera house and in Times Square, where New York City shuts Broadway down so thousands of people can watch the opera on the many screens on surrounding buildings (two in 2006 are shown below).

Normally, the Times Square screens are silent, but opera demands sound, so in this case the faster screens are delayed to match the one with the longest latency, and two viewing areas are set up with sound. The two areas are several blocks apart, so there’s no worry about hearing both at the same time. The lip sync seems the same whether viewing from 44th Street or 47th Street.
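Matching every screen to the slowest one is a simple calculation: each screen gets an added delay equal to the longest latency minus its own. The sketch below uses made-up screen names and latencies purely for illustration; none of the numbers come from the actual Times Square installation.

```python
def equalizing_delays_ms(latencies_ms):
    """Return the extra delay each display needs so that all of them
    show a given frame at the same moment as the slowest display."""
    longest = max(latencies_ms.values())
    return {name: longest - latency for name, latency in latencies_ms.items()}


# Hypothetical display latencies in milliseconds.
screens = {"screen_a": 20.0, "screen_b": 85.0, "screen_c": 50.0}
delays = equalizing_delays_ms(screens)

for name, delay in delays.items():
    print(f"{name}: add {delay:.0f} ms of video delay")

# The audio for the viewing areas then has to be delayed by the longest
# latency (plus any acoustic allowance) to stay in lip sync.
audio_delay_ms = max(screens.values())
```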

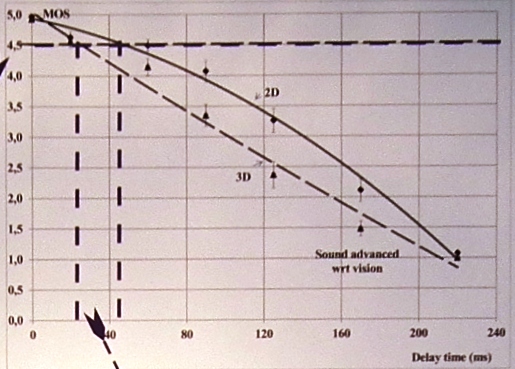

How good does single-TV lip sync have to be in a home? ITU-R recommendation BT.1359-1 indicates a threshold of detectability of about +45 milliseconds (audio before video by about 1.35 U.S.-standard frames) to -125 ms and a threshold of acceptability of +90 to -185 ms. But research (one graph shown below) revealed by the St. Petersburg State University of Film and Television in Russia at the International Broadcasting Convention in Amsterdam in September shows that, when active-shutter LCD glasses for 3D viewing are used, the threshold of detectability drops to just +27 ms, less than one U.S. television frame. For more information on this research, please contact the head of the video systems department, Konstantin Glasman.
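The BT.1359-1 figures quoted above can be turned into a quick check of a measured offset. This is a minimal sketch, not part of the recommendation itself: positive values mean audio ahead of video, the category labels are made up, and treating the boundary values as inclusive is an assumption.

```python
# Threshold values as quoted in the text (ms); positive = audio leads video.
DETECT_LEAD_MS, DETECT_LAG_MS = 45.0, -125.0
ACCEPT_LEAD_MS, ACCEPT_LAG_MS = 90.0, -185.0


def classify_av_offset(offset_ms):
    """Place an audio/video offset relative to the quoted thresholds."""
    if DETECT_LAG_MS <= offset_ms <= DETECT_LEAD_MS:
        return "undetectable"
    if ACCEPT_LAG_MS <= offset_ms <= ACCEPT_LEAD_MS:
        return "detectable but acceptable"
    return "unacceptable"


for offset in (0, 60, -150, 100, -200):
    print(f"{offset:+5d} ms: {classify_av_offset(offset)}")
```

Note the asymmetry: audio arriving early is noticed sooner than audio arriving late, which fits the observation that we are used to sound trailing distant images.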

It’s not just lip sync. Consider Amsterdam’s Music Theatre (below), home of that city’s main ballet and opera companies. It is one of the most technologically advanced, equipped with high-definition cameras, fiber-optic transmission systems, and computer lighting control systems built into each hanging truss. There’s even a robotic wireless-intercom distribution system.

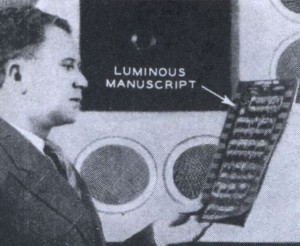

Both ballet and opera use an orchestra led by a conductor, and, for both, it’s sometimes important for the dancers and singers to be cued by the conductor. For centuries, the solution was for the performers to look directly at the conductor, with, perhaps, an occasional mirror in use. In 1928, opera-conductor Fritz Reiner came up with another idea, a television camera shooting the conductor, with monitors to convey his image. At right is a reason why it’s good that the idea didn’t catch on immediately. TV cameras at the time sometimes required darkened studios so a scanning light beam could be detected by photocells. To make it visible, music was printed in radium on black paper.

By the 1960s, the conductor camera was widely used, not only in TV studios but also in opera houses. At left is one of the many backstage conductor monitors used at the Metropolitan Opera House; others are placed in front of the stage in locations that can’t be seen by the audience. No matter where a singer looks, an image of the conductor is visible.

The “new” Metropolitan Opera House opened in 1966, so it should come as no surprise that its conductor monitors use picture tubes. The opera festival held in a 15th-century castle in Savonlinna, Finland has picture-tube-based conductor monitors, too (as shown at right).

Today, either fortunately or unfortunately, we live in the era of flat-panel TVs, and most of those have some latency, not great for musical cueing. So a broad range of different types of displays had to be investigated before the Amsterdam Music Theatre was able to find one with acceptable latency (of course, even newer technologies, such as OLED, offer lower latencies).

Perhaps, someday, possibly through direct stimulation of the visual and aural cortices of the brain, we’ll be able to get around latency issues. In the meantime, strive for zero, and be prepared to work with other numbers.